Between Progress and Peril: The Role of Artificial Intelligence (AI) in Shaping Modern Political Communication

DOI:

https://doi.org/10.23947/2334-8496-2025-13-3-823-835

Keywords:

Artificial intelligence (AI), Political Communication, Generative AI, Deepfakes, Disinformation, Large Language Models (LLMs)

Abstract

The integration of artificial intelligence (AI) into the digital communication landscape has substantially changed how political campaigns are designed and how public opinion is influenced. Drawing on machine learning (ML), generative models (GM) and natural language processing (NLP), AI tools have opened new opportunities for political engagement. AI-driven data analytics now allow campaigns to micro-target voters based on their psychographic profiles and to tailor political messages with high precision. At the same time, generative AI technologies are increasingly used to spread false information or to imitate political endorsements, which can strongly influence public opinion. The dissemination of such content can reinforce ideological prejudices and contribute to social divisions. This paper draws on recent empirical research and case studies to illustrate how AI-generated disinformation campaigns can affect electoral processes and undermine trust in democratic institutions. Examples ranging from the use of bots to manipulate social media to deepfake content impersonating political figures show that ethical, technological and legal safeguards are urgently needed. Furthermore, this paper supports an approach to AI governance that balances promoting innovation with reducing harm. This implies developing AI detection tools, transparency measures and cross-sector cooperation to promote accountability and information integrity. Given the increasingly rapid development of AI technology, greater digital literacy among citizens and proactive policy responses will be necessary in the near future to ensure the resilience of democratic systems.

References

Alvarez, R. M., Eberhardt, F., & Linegar, M. (2023, July). Generative AI and the Future of Elections (CSSPP White Paper). California Institute of Technology Center for Science, Society, and Public Policy. https://lindeinstitute.caltech.edu/documents/25475/CSSPP_white_paper.pdf

Appel, M., & Prietzel, F. (2022). The detection of political deepfakes. Journal of Computer-Mediated Communication, 27(4), zmac008. http://dx.doi.org/10.1093/jcmc/zmac008 DOI: https://doi.org/10.1093/jcmc/zmac008

Baltezarević, V., Baltezarević, R., & Milovanović, S. (2014). Between the lines and through the images. Informatologija, 47(1), 29-35. https://hrcak.srce.hr/file/178309

Baltezarević, R., Baltezarević, B., Kwiatek, P., & Baltezarević, V. (2019). The impact of virtual communities on cultural identity. Symposion, 6(1), 7-22. https://doi.org/10.5840/symposion2019611 DOI: https://doi.org/10.5840/symposion2019611

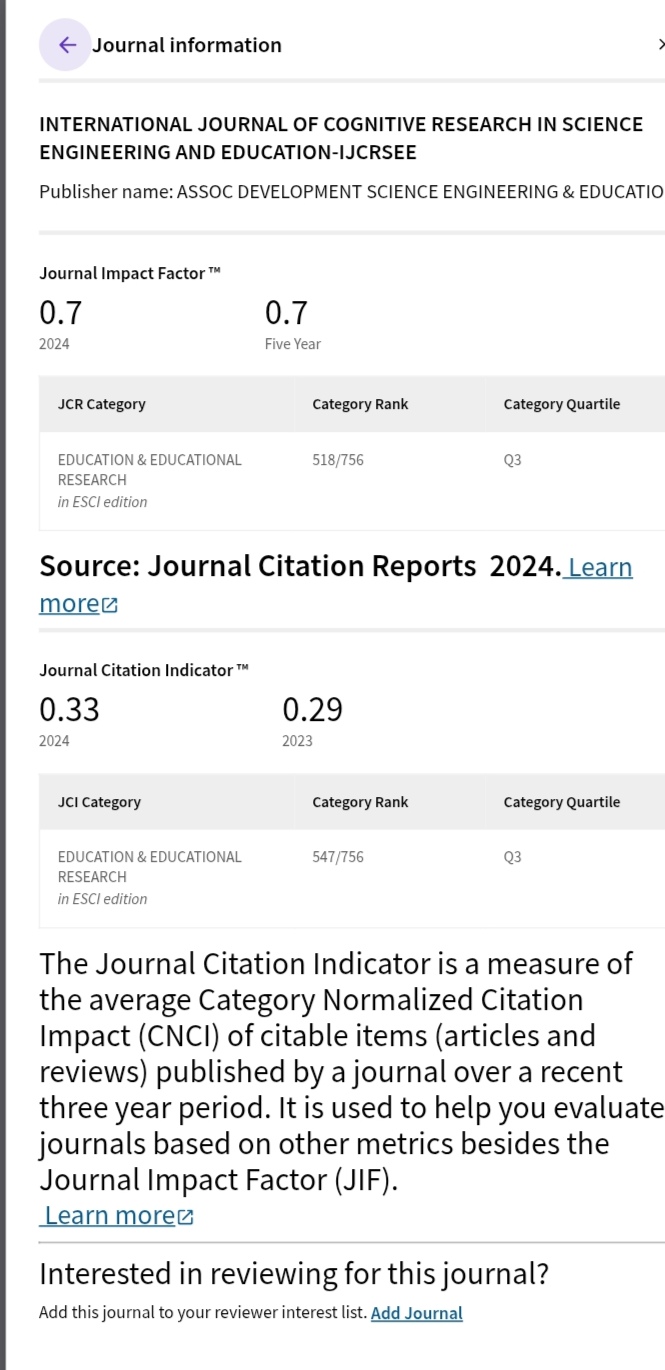

Baltezarević, R., & Baltezarević, I. (2024). Students’ Attitudes on The Role of Artificial Intelligence (Ai) In Personalized Learning. International Journal of Cognitive Research in Science, Engineering and Education (IJCRSEE), 12(2), 387-397. http://dx.doi.org/10.23947/2334-8496-2024-12-2-387-397 DOI: https://doi.org/10.23947/2334-8496-2024-12-2-387-397

Bareis, J., & Katzenbach, C. (2022). Talking AI into being: The narratives and imaginaries of national AI strategies and their performative politics. Science, Technology, & Human Values, 47(5), 855-881. https://doi.org/10.1177/01622439211030007 DOI: https://doi.org/10.1177/01622439211030007

Barclay D. A. (2018). Fake news, propaganda, and plain old lies: how to find trustworthy information in the digital age. Lanham, MD: Rowman & Littlefield. DOI: https://doi.org/10.5040/9798881821715

Battista, D., & Uva, G. (2023). Exploring the Legal Regulation of Social Media in Europe: A Review of Dynamics and Challenges—Current Trends and Future Developments. Sustainability, 15(5), 4144. https://doi.org/10.3390/su15054144 DOI: https://doi.org/10.3390/su15054144

Bessi, A., & Ferrara, E. (2016). Social Bots Distort the 2016 US Presidential Election Online Discussion. SSRN Electronic Journal. https://ssrn.com/abstract=2982233 DOI: https://doi.org/10.5210/fm.v21i11.7090

Bond, S. (2024, December). How AI deepfakes polluted elections in 2024. NPR. https://www.npr.org/2024/12/21/nx-s1-5220301/deepfakes-memes-artificial-intelligence-elections

Brady, W. J., Wills, J. A., Jost, J. T., Tucker, J. A., & Van Bavel, J. J. (2017). Emotion shapes the diffusion of moralized content in social networks. Proceedings of the National Academy of Sciences, 114(28), 7313–7318. http://dx.doi.org/10.1073/pnas.1618923114 DOI: https://doi.org/10.1073/pnas.1618923114

Bradshaw, S., & Howard, P. N. (2018). Challenging Truth and Trust: A Global Inventory of Organized Social Media Manipulation. Oxford University.

Brannon, W., Beeferman, D., Jiang, H., Heyward, A., & Roy, D. (2024). AudienceView: AI-assisted interpretation of audience feedback in journalism. arXiv. https://arxiv.org/abs/2407.12613 DOI: https://doi.org/10.1145/3678884.3681821

Brown, T., Mann, B., Ryder, N., Subbiah, M., Kaplan, J.D., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A. & Agarwal, S. (2020). Language models are few-shot earners. Advances in Neural Information Processing Systems, 33, 1877-1901. https://arxiv.org/abs/2005.14165

BSI (2025). Digression: Social Bots and Chatbots. Bundesamt für Sicherheit in der Informationstechnik. https://www.bsi.bund.de/EN/Themen/Verbraucherinnen-und-Verbraucher/Informationen-und-Empfehlungen/Onlinekommunikation/Soziale-Netzwerke/Sichere-Verwendung/Exkurs-bots/social-bots.html

Burkhardt, J. M. (2017). History of Fake News. Library Technology Reports, 53(8), 5-9. https://journals.ala.org/index.php/ltr/article/view/6497/8631

Carr, N. G. (2011). The shallows: What the internet is doing to our brains. W. W. Norton & Company.

CBS News. (2019, May). Doctored Nancy Pelosi video highlights threat of “deepfake” tech. https://www.cbsnews.com/news/doctored-nancy-pelosi-video-highlights-threat-of-deepfake-tech-2019-05-25/

Chang, T. (2025, April). The AI Bot Epidemic: The Imperva 2025 Bad Bot Report. Thales Group.https://cpl.thalesgroup.com/blog/access-management/ai-bots-internet-traffic-imperva-2025-report

Chawla, R. (2019). Deepfakes: How a pervert shook the world. International Journal of Advance Research and Development, 4(6), 4–8. https://www.semanticscholar.org/paper/Deepfakes-%3A-How-a-pervert-shook-the-world-Chawla/c3b3a6d27dbbfed4df630b39fc0a8a6692b1828a

Cheguri, P. (2023). Deepfake Technology: Concerns Raised in the Advertising Industries. Analytics Insight. https://www.analyticsinsight.net/topic/deepfake-technology

Chester, J., & Montgomery, K. C. (2017). The role of digital marketing in political campaigns. Internet Policy Review, 6(4), 1–20. http://dx.doi.org/10.14763/2017.4.773 DOI: https://doi.org/10.14763/2017.4.773

Citron, D. K., & Pasquale, F. (2019). The scored society: Due process for automated predictions. Washington Law Review, 89(1), 1–33. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2376209

Coeckelbergh, M. (2022). The Political Philosophy of AI: An Introduction. John Wiley & Sons: New York, NY, USA.

Corney, D., Wilkinson, K., & Cann, R. (2024, June). The AI election: How Full Fact is leveraging new technology for UK general election fact checking. Full Fact. https://fullfact.org/blog/2024/jun/the-ai-election-how-full-fact-is-leveraging-new-technology-for-uk-general-election-fact-checking/

Crawford, K. (2021). The atlas of AI: Power, politics, and the planetary costs of artificial intelligence. Yale University Press. https://doi.org/10.12987/9780300252392 DOI: https://doi.org/10.12987/9780300252392

Crilley, R. (2018). International relations in the age of ‘post-truth’ politics. International Affairs, 94(2), 417-425. http://dx.doi.org/10.1093/ia/iiy038 DOI: https://doi.org/10.1093/ia/iiy038

Cruz, B. (2024, August). 2024 Deepfakes Guide and Statistics. Security. https://www.security.org/resources/deepfake-statistics/

Dudfield, A. (2025, August). How to stop AI chatbots going rogue. Full Facts. https://fullfact.org/technology/how-to-stop-ai-chatbots-going-rogue/

Ethayarajh, K., Xu, W., Muennighoff, N., Jurafsky, D., & Kiela, D. (2024). KTO: Model Alignment as Prospect Theoretic Optimization. arXiv. https://arxiv.org/abs/2402.01306

European Commission. (2021, April). Proposal for a Regulation of the European Parliament and of the Council Laying Down Harmonised Rules on Artificial Intelligence (Artificial Intelligence Act) and amending certain union legislative acts. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A52021PC0206

European Parliament. (2022, July). Digital Services: landmark rules adopted for a safer, open online environment. https://www.europarl.europa.eu/news/en/press-room/20220701IPR34364/digital-services-act-eu-rules-to-make-digital-platforms-safer

Factiverse. (2025, May). How Factiverse Scans the Web to Tackle Misinformation at Scale. https://www.factiverse.ai/blog/how-factiverse-scans-the-web-to-tackle-misinformation-at-scale

Ferrara, E., Varol, O., Davis, C., Menczer, F., & Flammini, A. (2016). The rise of social bots. Communications of the ACM, 59(7), 96–104. https://dl.acm.org/doi/10.1145/2818717 DOI: https://doi.org/10.1145/2818717

Fetzer, J.H., & Fetzer, J.H. (1990). What Is Artificial Intelligence? Springer. DOI: https://doi.org/10.1007/978-94-009-1900-6_1

Fullfact. (2025). Find and Fight Bad Information. https://fullfact.ai/about/

Funk, A., Shahbaz, A., & Vesteinsson, K. (2023, November). The Repressive Power of Artificial Intelligence. Freedom House.https://freedomhouse.org/report/freedom-net/2023/repressive-power-artificial-intelligence

Gallo, M., Fenza, G., & Battista, D. (2022). Information Disorder: What about global security implications? Rivista di Digital Politics, 2(3), 523-538. https://doi.org/10.53227/106458

Gilbert, D. (2025, June). AI Chatbots Are Making LA Protest Disinformation Worse. Wired. https://www.wired.com/story/grok-chatgpt-ai-los-angeles-protest-disinformation

Gillham, J. (2025, September). Grover AI Content Detection Review. Originality.ai https://originality.ai/blog/grover-ai-content-detection-review

Gillespie, T. (2018). Custodians of the Internet: Platforms, Content Moderation, and the Hidden Decisions That Shape Social Media. Yale University Press. DOI: https://doi.org/10.12987/9780300235029

Gorwa, R. (2019). The platform governance triangle: conceptualising the informal regulation of online content. Internet Policy Review, 8(2). https://doi.org/10.14763/2019.2.1407 DOI: https://doi.org/10.14763/2019.2.1407

Guess, A.M., Lerner, M., Lyons, B., Montgomery, J.M., Nyhan, B., Reifler, J., & Sircar, N. (2020). A digital media literacy intervention increases discernment between mainstream and false news in the United States and India. Proceedings of the National Academy of Sciences of the United States of America, 117(27), 15536-15545. https://doi.org/10.1073/pnas.1920498117 DOI: https://doi.org/10.1073/pnas.1920498117

Hagerty, A., & Rubinov, I. (2019, July). Global AI ethics: A review of the social impacts and ethical implications of artificial intelligence. arXiv. https://arxiv.org/abs/1907.07892

Hetrick. C. (2024, July). How to spot AI fake news – and what policymakers can do to help. USC Price School of Public Policy.https://priceschool.usc.edu/news/ai-election-disinformation-biden-california-europe/

Hu, L., Wei, S., Zhao, Z., & Wu, B. (2022). Deep learning for fake news detection: A compre-hensive survey. AI Open, 3, 133-155, https://doi.org/10.1016/j.aiopen.2022.09.001 DOI: https://doi.org/10.1016/j.aiopen.2022.09.001

Islam, M.B.E., Haseeb, M., Batool, H., Ahtasham, N., & Muhammad, Z. (2024). AI Threats to Politics, Elections, and Democracy: A Blockchain-Based Deepfake Authenticity Verification Framework. Blockchains 2024, 2(4), 458–481. https://doi.org/10.3390/blockchains2040020 DOI: https://doi.org/10.3390/blockchains2040020

Jiang, J., Ren, X., & Ferrara, E. (2021). Social Media Polarization and Echo Chambers in the Context of COVID-19: Case Study. JMIRx Med, 2(3), e29570 https://doi.org/10.2196/29570 DOI: https://doi.org/10.2196/29570

Kerly, R. (2020, August). How Deepfakes Are Changing Digital Marketing. Loop Digital. https://www.loop-digital.co.uk/the-rise-of-deepfake-technology/

Khare, Y. (2023, April). The Role of AI in Political Campaigns: Revolutionizing the Game. Analytics Vidhya. https://www.analyticsvidhya.com/blog/2023/04/the-role-of-ai-in-political-campaigns-revolutionizing-the-game/

Klinger, U., Kreiss, D., & Mutsvairo, B. (2023). Platforms, Power, and Politics: A Model for an Ever-changing Field. Political Communication Report, 27, 1-6. http://dx.doi.org/10.17169/refubium-39045

Konopliov, A. (2024, June). Key Statistics on Fake News & Misinformation in Media in 2024. Redline Digital. https://redline.digital/fake-news-statistics/

Korshunov, P., & Marcel, S. (2018, December). DeepFakes: a New Threat to Face Recognition? Assessment and Detection. arXiv. https://arxiv.org/abs/1812.08685

Kumar, A., & Garg, G. (2020). Systematic Literature Review on Context-Based Sentiment Analysis in Social Multimedia. Multimedia Tools and Applications, 79(21-22), 15349–15380. https://doi.org/10.1007/s11042-019-7346-5 DOI: https://doi.org/10.1007/s11042-019-7346-5

Kumar, S. (2025, May). Why Governments Worldwide Are Enacting Stricter AI Deepfake Regulations in 2025. Medium. https://medium.com/@meisshaily/why-governments-worldwide-are-enacting-stricter-ai-deepfake-regulations-in-2025-32a61309366c

Landrin, S. (2024, May). India’s general election is being impacted by deepfakes. LeMonde. https://www.lemonde.fr/en/pixels/article/2024/05/21/india-s-general-election-is-being-impacted-by-deepfakes_6672168_13.html

Lazaro Cabrera, L. (2024, May). AI Policy & Governance, European Policy, Free Expression. EU AI Act Brief – Pt. 3, Freedom of Expression. Center for Democracy & Technology. https://cdt.org/insights/eu-ai-act-brief-pt-3-freedom-of-expression/

Loth, A., Kappes, M., & Pahl, M.-O. (2024, April). Blessing or curse? A survey on the Impact of Generative AI on Fake News. arXiv. https://arxiv.org/abs/2404.03021

Lutkevich, B. & Hildreth, S. (2022, February). Social listening (social media listening). TechTarget. https://www.techtarget.com/searchcustomerexperience/definition/social-media-listening

Maheshwari, S. (2024, August). Brands Love Influencers (Until Politics Get Involved). The New York Times. https://www.nytimes.com/2024/08/12/business/media/influencers-politics-ai-analysis.html

Matheis, A. (2023). How can artificial intelligence be used in political communication? Wegewerk. https://www.wegewerk.com/en/blog/how-can-artificial-intelligence-be-used-in-political-communication/

Mermoud, A. (2017, August). The Power of Big Data and Psychographics in politics. Swiss Intell. https://swissintell.ch/the-power-of-big-data-and-psychographics-in-politics/

Murali, P., Hernandez, J., McDuff, D., Rowan, K., Suh, J., & Czerwinski, M. (2021, January). AffectiveSpotlight: Facilitating the communication of affective responses from audience members during online presentations. arXiv. https://arxiv.org/abs/2101.12284 DOI: https://doi.org/10.1145/3411764.3445235

NBC News (2023, December). Putin quizzed about AI and body doubles by his apparent deepfake. https://www.nbcnews.com/video/putin-quizzed-about-ai-and-body-doubles-by-his-apparent-deepfake-200210501620

Nida-Rumelin, J., & Weidenfeld, N. (2019). Umanesimo digitale: un’etica per l’epoca dell’Intelligenza artificiale. FrancoAngeli.

Noto, G. (2024, May). Scammers siphon $25M from engineering firm Arup via AI deepfake ‘CFO’. CFO Dive. https://www.cfodive.com/news/scammers-siphon-25m-engineering-firm-arup-deepfake-cfo-ai/716501/

Nunziata, F. (2021). Il platform leader. Rivista di Digital Politics, 1(1), 127-146. https://www.rivisteweb.it/doi/10.53227/101176

Obot, O. U., Attai, K. F., Onwodi, G. O., James, I., & John, A. (2025). Sentiment analysis of electronic word of mouth (E-WoM) on e‑learning. In M. Khosrow‑Pour (Ed.), Encyclopedia of Information Science and Technology (6th ed., ch. 57). IGI Global. https://doi.org/10.4018/978-1-6684-7366-5.ch057 DOI: https://doi.org/10.4018/978-1-6684-7366-5.ch057

Pamment, J., Nothhaft, H., & Fjällhed, A. (2018). Countering information influence activities: A handbook for communicators. Swedish Civil Contingencies Agency (MSB). https://rib.msb.se/filer/pdf/28697.pdf

Parry, R. (2006, December). The GOP’s $3 Bn Propaganda Organ. The Baltimore Chronicle. https://baltimorechronicle.com/

Pennycook, G., Cannon, T.D., & Rand, D.G. (2018). Prior exposure increases perceived accuracy of fake news. Journal of Experimental Psychology: General, 147(12), 1865-1880. https://doi.org/10.1037/xge0000465 DOI: https://doi.org/10.1037/xge0000465

Petrosyan, A. (2025, May). Potential influence of AI and deepfakes on upcoming elections 2024, by country. Statista. https://www.statista.com/statistics/1534957/global-potential-influence-ai-elections-by-country/

Political Communication. (2023). AI and Political Communication. Political Communication Report, 27(Spring). https://politicalcommunication.org/article/ai-and-political-communication/

Populismstudies. (2018). Filter Bubbles. ECPS. https://www.populismstudies.org/Vocabulary/filter-bubbles/

Rajashekhargouda, P. (2022). Sentimental Analysis on Amazon Reviews Using Machine Learning. In Karuppusamy, P., García Márquez, F.P., & Nguyen, T.N., (Eds.). Ubiquitous Intelligent Systems (pp. 467–477). Springer Nature Singapore. DOI: https://doi.org/10.1007/978-981-19-2541-2_37

Roberts, S. T. (2019). Behind the screen: Content moderation in the shadows of social media. Yale University Press. DOI: https://doi.org/10.12987/9780300245318

Roozenbeek, J., & van der Linden, S. (2020). Breaking Harmony Square: A game that “inoculates” against political misinformation. Harvard Kennedy School (HKS) Misinformation Review, 1(8). https://doi.org/10.37016/mr-2020-47 DOI: https://doi.org/10.37016/mr-2020-47

Samoili, S., López Cobo, M., Gómez, E., De Prato, G., Martínez-Plumed, F., & Delipetrev, B., (2020). AI watch. Defining artificial intelligence. Towards an operational definition and taxonomy of artificial intelligence (EUR 30117 EN). Publications Office of the European Union. https://doi.org/10.2760/382730

Schneider, B. (2017, June). How Vote Leave Used Data Science and A/B Testing to Achieve Brexit. AB Tasty. https://www.abtasty.com/blog/data-science-ab-testing-vote-brexit/

Seitz-Wald, A. (2024, February). A New Orleans magician says a Democratic operative paid him to make the fake Biden robocall. NBC News. https://www.nbcnews.com/politics/2024-election/biden-robocall-new-hampshire-strategist-rcna139760?_ga=2.181210351.976717714.1719011557-176973521.1719011550

Shen, T., Ruixian, L., Ju, B., & Zheng, L. (2018). ‘Deep Fakes’ Using Generative Adversarial Networks (GAN) (Report No. 16). Noiselab, University of California, San Diego. http://noiselab.ucsd.edu/ECE228_2018/Reports/Report16.pdf

Somers, M. (2020, July). Deepfakes, explained. MIT Sloan School of Management. https://mitsloan.mit.edu/ideas-made-to-matter/deepfakes-explained

Statista Research Department. (2024). U.S. adults worry about AI-generated political propaganda 2023. Statista. https://www.statista.com/statistics/1471069/us-adults-ai-generated-political-propaganda/

Surfshark. (2025). Deepfake statistics in early 2025: how frequently are famous people targeted? https://surfshark.com/research/study/deepfake-statistics

Sustainability Directory. (2025, May). How Effective Is Digital Literacy in Addressing Misinformation? https://sustainability-directory.com/question/how-effective-is-digital-literacy-in-addressing-misinformation/

Suzor, N. (2019). Lawless: The secret rules that govern our digital lives. Cambridge University Press. https://doi.org/10.1017/9781108666428 DOI: https://doi.org/10.1017/9781108666428

Tandoc, E. C., J., Lim, Z. W., & Ling, R. (2018). Defining “Fake News”: A typology of scholarly definitions. Digital Journalism, 6(2), 137–153. https://doi.org/10.1080/21670811.2017.1360143 DOI: https://doi.org/10.1080/21670811.2017.1360143

Thornhill, J. (2024, June). The danger of deepfakes is not what you think. The Straits Times. https://www.straitstimes.com/opinion/the-danger-of-deepfakes-is-not-what-you-think

Verma, P., & De Vynck, G. (2024, January). AI is destabilizing ‘the concept of truth itself’ in 2024 election. The Washington Post. https://www.washingtonpost.com/technology/2024/01/22/ai-deepfake-elections-politicians

Virginia Tech. (2024, February). AI and the spread of fake news sites: Experts explain how to counteract them. Virginia Tech News. https://news.vt.edu/articles/2024/02/AI-generated-fake-news-experts.html

Viudes, F. J. (2023). Revolucionando la política: El papel omnipresente de la IA en la segmentación y el targeting de campañas modernas. Más poder local, (53), 146-151. https://doi.org/10.56151/maspoderlocal.183 DOI: https://doi.org/10.56151/maspoderlocal.183

Vosoughi, S., Deb, R. & Aral, S. (2018). The spread of true and false news online. Science, 359(6380),1146-1151. https://www.science.org/doi/10.1126/science.aap9559 DOI: https://doi.org/10.1126/science.aap9559

Wakefield, J. (2019, November). Brittany Kaiser calls for Facebook political ad ban at Web Summit. BBC News. https://www.bbc.com/news/technology-50234144

Watson, A. (2024, January). Fake news in Europe - statistics & facts. Statista. https://www.statista.com/topics/5833/fake-news-in-europe/#topicOverview

Weforum (2024). Fake news undermines democracy, warns global survey. https://www.weforum.org/videos/influence-of-fake-news/

Westerlund, M. (2019). The emergence of deepfake technology: A review. Technology Innovation Management Review, 9(11), 40-53. https://doi.org/10.22215/timreview/1282 DOI: https://doi.org/10.22215/timreview/1282

Yu, C. (2024). How will AI steal our elections? (OSF Preprint, un7ev). Center for Open Science. https://doi.org/10.31219/osf.io/un7ev DOI: https://doi.org/10.31219/osf.io/un7ev

Zandt, F. (2024, March). How Dangerous are Deepfakes and Other AI-Powered Fraud? Statista Daily Data. https://www.statista.com/chart/31901/countries-per-region-with-biggest-increases-in-deepfake-specific-fraud-cases/

Zannettou, S., Sirivianos, M., Blackburn, J. & Kourtellis, N. (2019). The Web of False Information: Rumors, Fake News, Hoaxes, Clickbait, and Various Other Shenanigans. Journal of Data and Information Quality, 1(3), Article No. 10. https://doi.org/10.1145/3309699 DOI: https://doi.org/10.1145/3309699

Zellers, R., Holtzman, A., Rashkin, H., Bisk, Y., Farhadi, A., Roesner, F., & Choi, Y. (2019). Defending against neural fake news. Advances in neural information processing systems, 32. https://arxiv.org/abs/1905.12616

License

Copyright (c) 2025 Radoslav Baltezarević, Vladimir Lović, Ivana Baltezarević

This work is licensed under a Creative Commons Attribution 4.0 International License.

Accepted 2025-12-01

Published 2025-12-20